Responsible AI in Practice: Lessons from Experience at Scale

European Center for Algorithmic Transparency / Joint Research Centre

Navigating the Cyber Minefields

Participated in the "Navigating the Cyber Minefields" panel at the Bioneers 2023 conference with Cindy Cohn and Randima Fernando, moderated by Kellen Klein.

Ethics in AI

Innovit (Italian Innovation and Culture Hub)

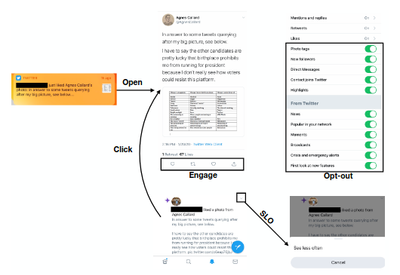

Algorithmic amplification of politics on Twitter

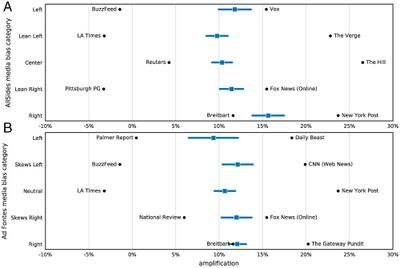

Based on a massive-scale experiment involving millions of Twitter users, this study carries out the most comprehensive audit of an algorithmic recommender system and its effects on political content. The results show that content from the political right receives higher algorithmic amplification than content from the political left.

Algorithmic Amplification of Politics on Twitter

Brown Data Science Initiative 2022

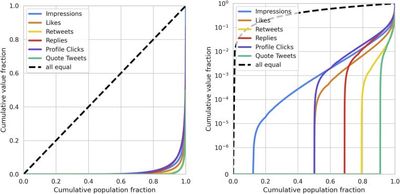

Measuring disparate outcomes of content recommendation algorithms with distributional inequality metrics

In recent years, many examples of the potential harms caused by machine learning systems have come to the forefront. Practitioners in the field of algorithmic bias and fairness have developed a suite of metrics to capture one aspect of these harms.

From Optimizing Engagement to Measuring Value

Most recommendation engines today are based on predicting user engagement, for example whether a user will click on an item. However, there is potentially a large gap between engagement signals and a desired notion of "value" that is worth optimizing for.

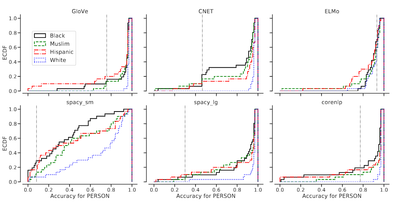

Assessing demographic bias in named entity recognition

Named Entity Recognition (NER) is often the first step towards automated Knowledge Base (KB) generation from raw text. In this work, we assess the bias in various NER systems for English across different demographic groups using synthetically generated corpora.